Stable Diffusion

- Basic ComfyUI Workflows with minimal custom nodesgithub.com GitHub - pwillia7/Basic_ComfyUI_Workflows: Basic Stable Diffusion Workflows for ComyUI using minimal custom nodes

Basic Stable Diffusion Workflows for ComyUI using minimal custom nodes - pwillia7/Basic_ComfyUI_Workflows

Basic ComfyUI workflows without 10k custom nodes or impossible to follow workflows.

- AI startup Stability lays off 10% of staff after controversial CEO's exit: Read the full memowww.cnbc.com AI startup Stability lays off 10% of staff after controversial CEO's exit: Read the full memo

Stability AI laid off several employees to "right-size" the business after a period of unsustainable growth, according to an internal memo obtained by CNBC.

- How Stability AI’s Founder Tanked His Billion-Dollar Startupwww.forbes.com How Stability AI’s Founder Tanked His Billion-Dollar Startup

Unpaid bills, bungled contracts and a disastrous meeting with Nvidia's kingmaker CEO. Inside the stunning downfall of Emad Mostaque.

https://archive.is/FkzdE

- Inside the $1 billion love affair between Stability AI’s ‘complicated’ founder and tech investors Coatue and Lightspeed—and how it turned bitter within monthsfortune.com Inside the $1 billion love affair between Stability AI’s ‘complicated’ founder and tech investors Coatue and Lightspeed—and how it turned bitter within months

Stability AI, led by CEO Emad Mostaque, emerged as one of the first high-flying startups in the generative AI boom, garnering a $1 billion seed valuation. Within months the relationship with investors broke down and spurred a campaign to oust Mostaque, who resigned on Saturday.

Without paywall: https://archive.ph/8QkSl

- Key Stable Diffusion Researchers Leave Stability AI As Company Flounderswww.forbes.com Key Stable Diffusion Researchers Leave Stability AI As Company Flounders

Robin Rombach and a group of key researchers that helped develop the Stable Diffusion text-to-image generation model have left the troubled generative AI startup.

- AI generates high-quality images 30 times faster in a single stepnews.mit.edu AI generates high-quality images 30 times faster in a single step

A new distribution matching distillation (DMD) technique merges GAN principles with diffusion models, achieving 30x faster high-quality image generation in a single computational step and enhancing tools like Stable Diffusion and DALL-E.

- Why is AI so bad at spelling? Because image generators aren't actually reading texttechcrunch.com Why is AI so bad at spelling? Because image generators aren't actually reading text | TechCrunch

AIs are easily acing the SAT, defeating chess grandmasters and debugging code like it’s nothing. But put an AI up against some middle schoolers at the

- Midjourney bans all Stability AI employees over alleged data scrapingwww.theverge.com Midjourney bans all Stability AI employees over alleged data scraping

Midjourney claims the alleged activity caused a 24-hour service outage.

- Stable Diffusion 3 arrives to solidify early lead in AI imagery against Sora and Geminitechcrunch.com Stable Diffusion 3 arrives to solidify early lead in AI imagery against Sora and Gemini | TechCrunch

Stability AI has announced Stable Diffusion 3, the latest and most powerful version of the company's image-generating AI model. While details are scant,

- Factorio Blueprint Visualizer SDXL Lora

I created a custom SDXL Lora using my dataset. I created the dataset using a previous generative art tool I build to visualize factorio blueprints: https://github.com/piebro/factorio-blueprint-visualizer. I like the lora to create interesting patterns.

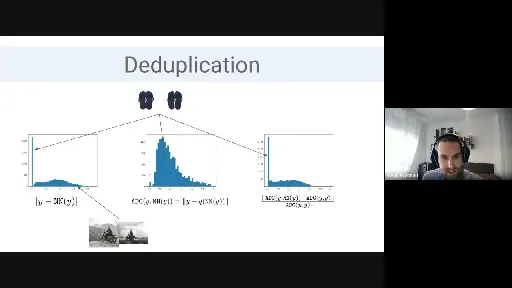

- Efficient Training Image Extraction from Diffusion Models Ryan Webs

YouTube Video

Click to view this content.

- Introducing Stable Video Diffusion — Stability AIstability.ai Introducing Stable Video Diffusion — Stability AI

Stable Video Diffusion is a proud addition to our diverse range of open-source models. Spanning across modalities including image, language, audio, 3D, and code, our portfolio is a testament to Stability AI’s dedication to amplifying human intelligence.

- NVIDIA TensorRT Extension for Stable Diffusion Performance Analysiswww.pugetsystems.com NVIDIA TensorRT Extension for Stable Diffusion Performance Analysis

NVIDIA has released a TensorRT extension for Stable Diffusion using Automatic 1111, promising significant performance gains. But does it work as advertised?

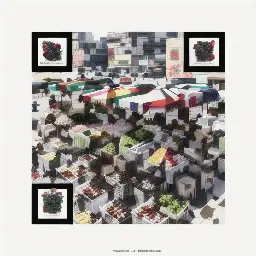

- AI Generated QR Codes with Stable Diffusion and ControlNetreticulated.net AI Generated QR Codes with Stable Diffusion and ControlNet

A look at generating creative QR codes with QRCodeMonster

- GitHub - leejet/stable-diffusion.cpp: Stable Diffusion in pure C/C++github.com GitHub - leejet/stable-diffusion.cpp: Stable Diffusion in pure C/C++

Stable Diffusion in pure C/C++. Contribute to leejet/stable-diffusion.cpp development by creating an account on GitHub.

- Announcing StableCodestability.ai Announcing StableCode — Stability AI

Stability AI has just announced the release of StableCode, its very first LLM generative AI product for coding. This product is designed to assist programmers with their daily work while also providing a great learning tool for new developers ready to take their skills to the next level.

Stability AI has just announced the release of StableCode, its very first LLM generative AI product for coding.

- Do you run locally?

I was curious, do you run Stable Diffusion locally? On someone else's server? What kind of computer do you need to run SD locally?

- Mystical Solitude: The Enigmatic Twilight of a Mountain Landscape

Prompt created locally via wizardLM-7b

> Describe an eerie image of a mountain landscape

>An eerie image of a mountain landscape could be one where the sun is setting behind the mountains, casting long shadows across the valleys below. The sky may be painted with a palette of oranges, purples, and pinks as the light fades away into darkness. The peaks of the mountains are shrouded in mist, creating a sense of mystery and intrigue. The silence is broken only by the occasional howl of a wolf or the rustling of leaves in the wind. It's a scene that evokes a sense of solitude and isolation, making it feel like you're at the edge of the world.

Image created locally via

>Steps: 30, Sampler: DPM++ 2M Karras, CFG scale: 4.5, Seed: 2599999378, Size: 512x512, Model hash: 4199bcdd14, Model: revAnimated_v122, Version: v1.3.0

>Time taken: 13.70s

- Alfa Romeo Giulia + Inkpunk Diffusion

This one was fun to work on. Used Inkpunk Diffusion - no img2img or controlnet - straight prompt editing.

- Alfa Romeo Giulia

I worked on this one for a family member who owns a blue Alfa Romeo Giulia. No post processing, I just used Inkpunk Diffusion model and kept running and tweaking prompts then upscaled my favorite..

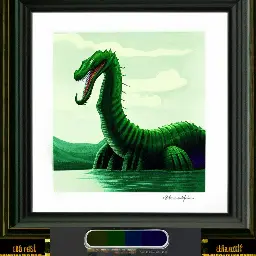

- Set up Stable Diffusion over the weekend. Been messing around generating ai images.mastodon.social Elegast (@Elegast123@mastodon.social)

Attached: 1 image Set up #stablediffusion over the weekend. Been messing around generating ai images. Here are a few. Prompt: concept art, Eclectic Loch Ness Monster, Relaxed, Rusticcore, backlight, F/8, Electic Colors Steps: 20 Sampler: Euler a, Model: FAD-foto-assisted-diffusion_V0, Version: v...

- Is it possible to know the token impact in a prompt?

I understand that, when we generate images, the prompt itself is first split into tokens, after which those tokens are used by the model to nudge the image generation in a certain direction. I have the impression that the model gets a higher impact of one token compared to another (although I don't know if I can call it a weight). I mean internally, not as part of the prompt where we can also force a higher weight on a token.

Is it possible to know how much a certain token was 'used' in the generation? I could empirically deduce that by taking a generation, stick to the same prompt, seed, sampling method, etc. and remove words gradually to see what the impact is, but perhaps there is a way to just ask the model? Or adjust the python code a bit and retrieve it there?

I'd like to know which parts of my prompt hardly impact the image (or even at all).

- What kind of hardware are yall running?

I really want to setup an instance at home I know the MI25 can be hacked into doing this well but I would love to know what other people are running and see if I can find a good starter kit.

THX ~inf

- Liminal Autumn.

one of my favourite things about stablediffusion is that you can get weird dream-like worlds and architectures. how about a garden of tiny autumn trees?

- How to make a QR code with Stable Diffusionstable-diffusion-art.com How to generate a QR code with Stable Diffusion - Stable Diffusion Art

A recent Reddit post showcased a series of artistic QR codes created with Stable Diffusion. Those QR codes were generated with a custom-trained ControlNet

- Creating somewhat consistent Characters as Illustrations for TTRPG

I don't know if this community is intened for posts like this, if not, I'm sorry and I'll delete this post ASAP....

So, I play TTRPG (mostly online) and I'm a big fan of visual aids, so I wanted to create some chahrcter images for my charakter in the new campaign I'm playing in. I don't need perfect consistency as humans usually change a little over time and I only needed the character to be recognizable on a couple of images that are usually viewed on their own and not side by side, so nothing like the consistency you'd need for a comic book or something similar. So I decided to create a Textual Inversion following this tutorial and it worked way better than expected. After less than 6 epochs I had a consistency that was enough for my usecase and it didn't start to overfit when I stopped the training around epoch 50.

!Generated image of a character wearing a black hoodie standing in a rundown neighborhood at night !Generated image of the character wearing a black hoodie standing on a street !Gerneated image of the character cosplaying as Ironman !Generater image of the character cosplaying as Amos from the Expanse

Then my SO, who's playing in the same campaign asked me to do the same for their character. So we went through the motions and created and filtered the images. A first training attempt had the TI starting to overfit halfway through the second epoch, so I lowered the learning rate by factor five and started another round. This time the TI started overfitting somewhere around epoch 8 without reaching consistency before. The generated images alternate between a couple of similar yet distinguishable faces. To my eye the training images seem to have a simliar or higher quality than the images I used in the first set. Was I just lucky with my first TI and unlucky with the other two and simply should keep on trying or is there something I should change (like the learningrate that still seems high to me with 0.0002 judging from other machine learning topics)?