AI Go bots not so superhuman after all

The usual AI pumpers have suggested the bots are superhuman!

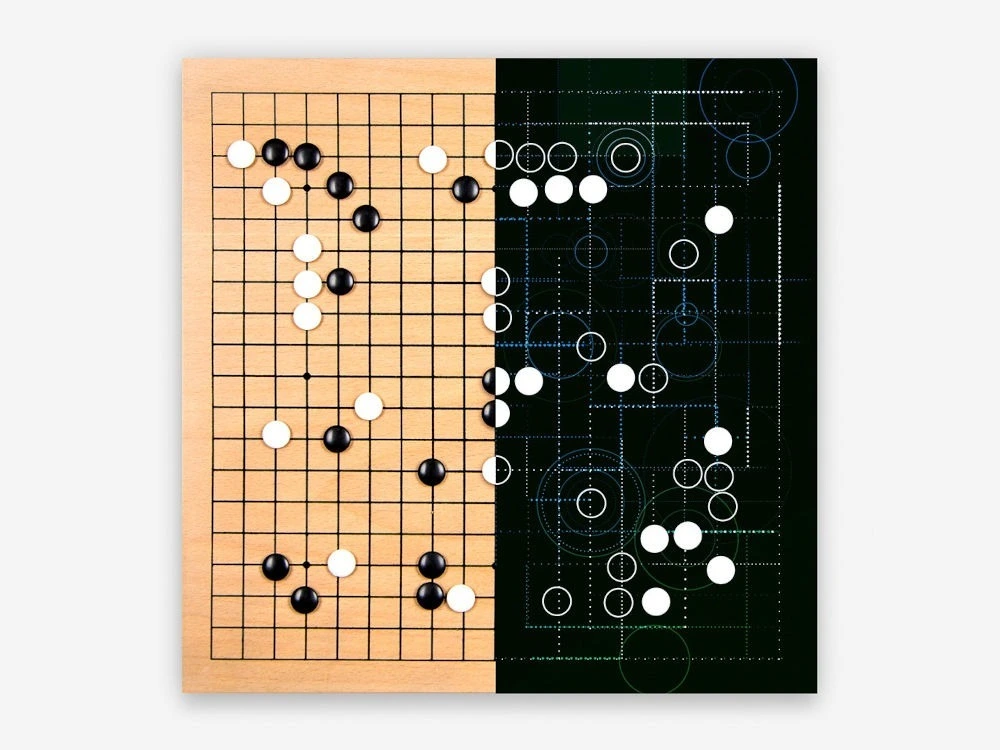

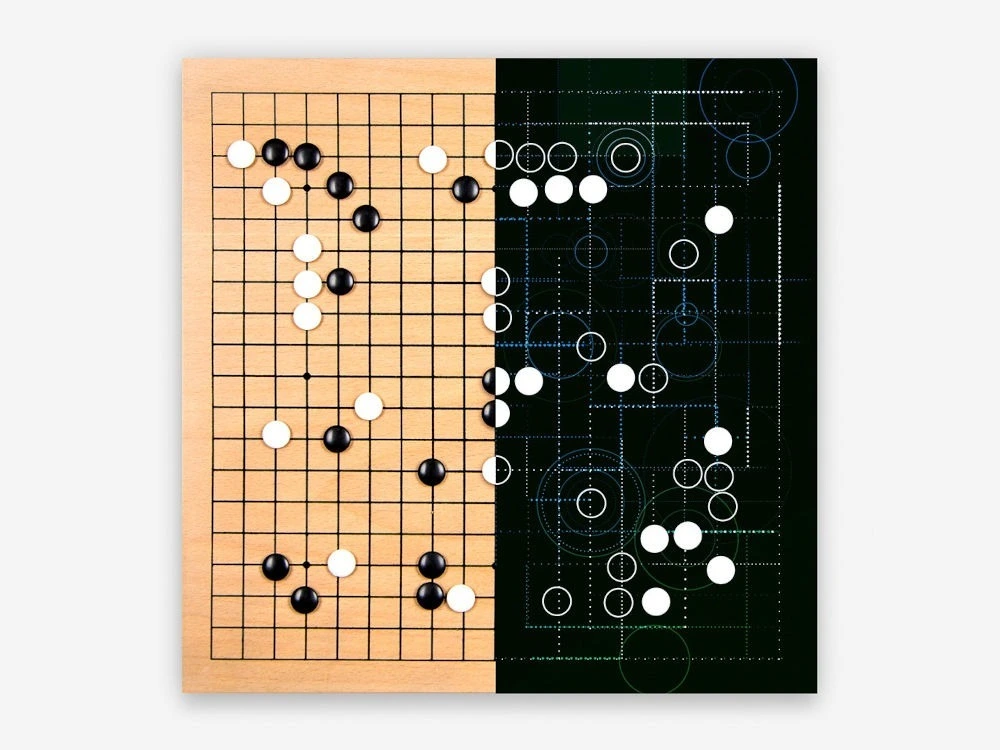

They are technically correct. The AI is superhuman when it comes to playing Go, by most measures. I'm less sure about "far" superhuman. The usual type of machine wins against professional human players every single time if the humans are trying to play well, but it's not as if it's off in another dimension playing moves we could never possibly comprehend. Many strong Go players probably still disagree with me there, but understanding what it's doing has at least turned out to be less hopeless than some first assumed. Its moves can usually be analysed and understood with enough effort, and where they can't, the difference in points won or lost is often small. Its main advantage is being inhumanly precise, never making the small errors of judgement that humans always make. Over the course of a lengthy game of Go, that precision gradually adds up to an impressively large margin of victory.

KataGo is not an artificial general intelligence. It is a Go-playing intelligence. And this class of flaw that's been found in it is due to the particular algorithm it uses (essentially the same one as AlphaGo's). It lacks basic human common sense, having found neither the need nor the ability to develop it in training. Where humans playing the game can easily count how much space a group has and act accordingly, the program has only its strict Monte Carlo-based way of viewing the game and no access to such basic general-purpose tools of reasoning. It can only consider one move at a time, and this lets it down in carefully constructed situations that do not normally occur in human play, since humans wouldn't fall for something so stupid.
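For readers who don't play, "counting how much space a group has" mostly means counting its liberties: the empty points touching a connected chain of stones. The sketch below writes out that hand-count in Python as a simple flood fill. It is not taken from KataGo or any other engine. The board encoding (a list of strings using 'B', 'W' and '.') and the function name `group_and_liberties` are hypothetical, chosen only for illustration.

```python
from collections import deque

def group_and_liberties(board, row, col):
    """Return (stones, liberties) for the group containing (row, col).

    Hypothetical encoding: board is a list of equal-length strings where
    'B' is black, 'W' is white and '.' is an empty point.
    """
    colour = board[row][col]
    if colour == '.':
        raise ValueError("no stone at that point")
    rows, cols = len(board), len(board[0])
    stones = {(row, col)}
    liberties = set()
    queue = deque([(row, col)])
    # Breadth-first flood fill over orthogonally adjacent same-colour stones.
    while queue:
        r, c = queue.popleft()
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if not (0 <= nr < rows and 0 <= nc < cols):
                continue  # off the edge of the board
            point = board[nr][nc]
            if point == '.':
                liberties.add((nr, nc))  # empty neighbour: a liberty
            elif point == colour and (nr, nc) not in stones:
                stones.add((nr, nc))     # same colour: part of the group
                queue.append((nr, nc))
    return stones, liberties

board = [
    "..W..",
    ".WBW.",
    ".WB..",
    "..W..",
]
stones, libs = group_and_liberties(board, 1, 2)
print(sorted(stones), sorted(libs))
```

On this small board, the black pair at (1, 2) and (2, 2) has a single liberty at (2, 3), so it is in atari.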

Its failing is much narrower than those of the LLM chatbots that everyone loves so much, but not so different in character. The machines are super-humanly good at the things they're good at. That's not too surprising; so is a forklift. When their algorithms fail them, in situations that look to naive humans very similar to the ones they're good at, they're not. When it works, it's superhuman in many ways. When it goes wrong, it's often wrong in ways that seem obviously stupid.

I suspect that this problem the machines have with Go would be an excellent example for researchers to work with. It's relatively easy to understand approximately why the machines go wrong and what sort of thing would be required to fix it. Yet it's very difficult to actually solve the problem in a general way through the purely independent training that was the great achievement of AlphaGo Zero, rather than giving up and hard-coding a fix for this one thing specifically. Facing far more numerous and difficult failure modes, the LLM people lately seem busy hacking together crude, imperfect fixes one thing at a time. Maybe if some of them have time to take a break from that, they could learn something from the game of Go.

technically superhuman, the best kind of superhuman!

we could worry about a super-forklift leveraging its technically superhuman abilities to go FOOM, except that's "Killdozer" and I don't know if Eliezer's read that one.

The machines are super-humanly good at the things they’re good at. That’s not too surprising; so is a forklift.

Amazing quote, I'm gonna steal it.

Where is my Big Forklift lobby? What's your P(doom) from forklifts lifting us so high we escape the atmosphere and all die? Should there be a ban on forklift development?

already too late, forklifts move around most military weapons, including nuclear warheads

the most critical areas of our society have been infiltrated by forklifts

Wouldn't a truly dangerous nuclear warhead forklift itself? Oh my god... Is the singularity all of us merging with forklifts?

The AI is superhuman when it comes to playing Go, by most measures.

Except beating humans, apparently.

Yeah, aside from everything else it's very satisfying to see the humans win this one.

It had a winning record for like 8 years in a row before humans found a strategy that beats it, that seems pretty good.

Humans can't beat AI at Go, aside from these exploits that we needed AI to tell us about first.

Lee Sedol managed to win one game against AlphaGo in 2016 (and AlphaGo Zero was beating AlphaGo 100-0 a year later). That was basically the last time humans got on the scoreboard.

lol

Humans can’t beat AI at Go, aside from these exploits

kek, reminds me of when I was a wee one and I'd 0 to death chain grab someone in smash bros. The lads would cry and gnash their teeth about how I was only winning b.c. of exploits. My response? Just don't get grabbed. I'd advise "superhuman" Go systems to do the same. Don't want to get cheesed out of a W? Then don't use a strat that's easily countered by monkey brains. And as far as designing an adversarial system to find these 'exploits', who the hell cares? There's no magic barrier between internalized and externalized cognition.

Just get good bruv.

I appreciate this perspective, especially

There’s no magic barrier between internalized and externalized cognition.

I think it's increasingly clear that cognition is networking, and no matter how you are constructed, it's both internal and external, and that in a sense, the objects aren't the important thing (the relationships are).

Like, maybe there aren't shortcuts. If you want perfect Go play you may very well have to pay the full inductive price. And even then, congrats, but Go still exists.

It's interesting to see how chess has continued to be relevant, hell, possibly even more popular than it's ever been, due to increased accessibility, alternative formats, and embracing the performance aspects of the game.

did you know that humanity has been staring at numbers and doing math for millennia, and yet we still pay mathematicians? fucking outrageous, right? and yet these wry fuckers still end up finding whole new things! things in areas we've known about for centuries! the nerve of them! didn't they know we have computers to look into this now?!

You're arguing against a point I'm not making.

I play Go, and have since I learnt about the game when it was discussed in my Computer Science degree course (back then, computers were considered 50+ years away from beating humans).

Overall, AlphaGo has been a good thing for human players, with it validating a lot of what we thought was right, but also that some tactics we'd thought not worth playing do work out. Having a superhuman, free advisor has made improving much easier.

The negatives include that there's less individual style amongst those that play like AIs, and also that it's easier to cheat at the game.

As in chess, humans have been outclassed by computers in Go for years now, but that doesn't stop us playing and enjoying it.

this is not debate club, and that sound you didn't hear on account of your noise-cancelling headphones was you missing your stop