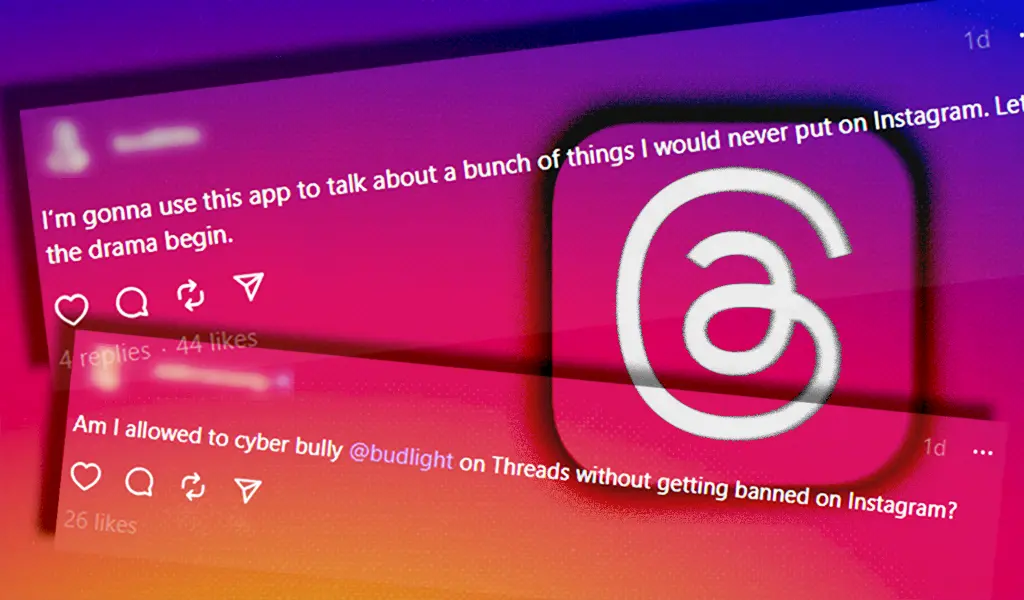

Far-right figures, including Nazi supporters, anti-gay extremists, and white supremacists, are flocking to Threads

www.mediamatters.org